Filebeat settings

6/28/2023

The ELK Stack has incorporated a fourth component on top of Elasticsearch, Logstash, and Kibana: Beats, a family of log shippers for different use cases and sets of data. Filebeat is probably the most popular and commonly used member of this family, and this article seeks to give those getting started with it the tools and knowledge they need to install, configure, and run it to ship data into the other components of the stack.

What Is Filebeat?

Filebeat is a log shipper belonging to the Beats family: a group of lightweight shippers installed on hosts for shipping different kinds of data into the ELK Stack for analysis. Each beat is dedicated to shipping a different type of information. Winlogbeat, for example, ships Windows event logs, Metricbeat ships host metrics, and so forth. Filebeat, as the name implies, ships log files.

In an ELK-based logging pipeline, Filebeat plays the role of the logging agent. It is installed on the machine generating the log files, tails them, and forwards the data either to Logstash for more advanced processing or directly into Elasticsearch for indexing. Filebeat is, therefore, not a replacement for Logstash, but it can (and in most cases should) be used in tandem with it. You can read more about the story behind the development of Beats and Filebeat in this article.

Written in Go and based on the Lumberjack protocol, Filebeat was designed to have a low memory footprint, handle large bulks of data, support encryption, and deal efficiently with back pressure.
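The following sketch shows the agent role described above: a minimal `filebeat.yml` that tails a directory of log files and forwards the events to Logstash. It is not taken from the article. The paths, host name, port, and input `id` are placeholders. The `filestream` input type assumes a recent Filebeat release; older 7.x setups typically use `type: log` instead.

```yaml
# Minimal sketch: Filebeat as a logging agent (all paths and hosts are placeholders).
filebeat.inputs:
  - type: filestream            # tails the matching files line by line
    id: app-logs                # hypothetical id; each filestream input should have a unique one
    paths:
      - /var/log/myapp/*.log    # hypothetical location of the log files

# Forward events to Logstash for more advanced processing...
output.logstash:
  hosts: ["logstash.example.com:5044"]

# ...or send them directly to Elasticsearch instead.
# Only one output may be enabled at a time, so this one stays commented out.
#output.elasticsearch:
#  hosts: ["http://localhost:9200"]
```

If Logstash is the receiving end, it needs a `beats` input listening on the same port that Filebeat sends to.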

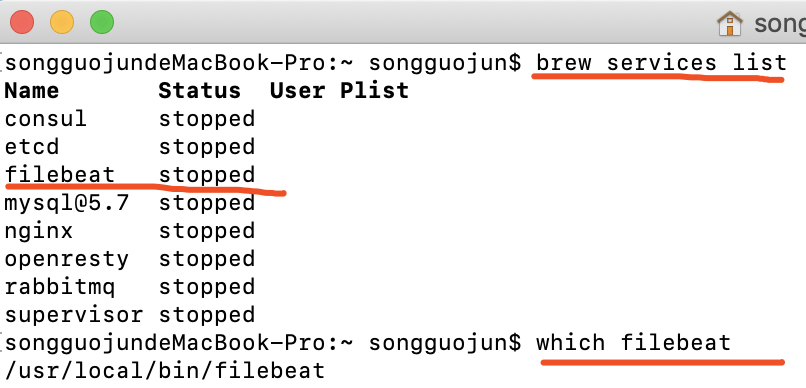

The ELK Stack is no longer the ELK Stack: it's being renamed the Elastic Stack.

I am trying to index my custom log file using Filebeat. I am successfully running Filebeat with pre-built modules like mysql, nginx, etc. But when I try to use it with my application-specific log file, the index is created with 0 documents. I could not find anywhere in the Filebeat documentation whether any specific steps need to be taken to ensure indexing takes place for custom log files.

Below is the filebeat.yml:

`filebeat.inputs:`

`Applications/MAMP/htdocs/247around-adminp-aws/application/logs/log-.log`

As can be seen, it is mostly default. I did not get any error when I set up Filebeat or when I ran it after setup.

My custom log file log-.php is:

- `INFO - 15:10:26 -> index Logging details have been captured for employee.`
- `INFO - 15:10:36 -> SELECT DISTINCT service_id, brand, active`
- `ERROR - 15:10:36 -> Query error: Expression #1 of SELECT list is not in GROUP BY clause and contains nonaggregated column '' which is not functionally dependent on columns in GROUP BY clause this is incompatible with sql_mode=only_full_group_by`
- `INFO - 15:10:36 -> Database Error: A Database Error OccurredArray`
- `ERROR - 15:10:54 -> Query error: Expression #5 of SELECT list is not in GROUP BY clause and contains nonaggregated column 'rvice_centres.district' which is not functionally dependent on columns in GROUP BY clause this is incompatible with sql_mode=only_full_group_by`
- `INFO - 15:10:54 -> Database Error: A Database Error OccurredArray`

The index is getting created with 0 documents:

Log file showing logs for Filebeat setup and Filebeat running:

Why are there no error messages if something is wrong and documents are not getting indexed? I should be getting some error if things are not right.
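Filebeat usually stays silent when an input has nothing to read, so an empty index with no errors often points at the input configuration rather than at Elasticsearch. The surviving fragments of the question cannot confirm the cause, but a few things are worth ruling out:

- The sample `filebeat.yml` shipped with Filebeat sets its example input to `enabled: false`, so a "mostly default" file may never read anything. `filebeat setup` still creates the index, which would then stay empty.
- The configured path ends in `.log`, while the file described is `log-.php`. If the glob does not match the real file names, no file is harvested.

The sketch below makes both points explicit and raises Filebeat's own log level. The directory and the `log-*.php` pattern are hypothetical stand-ins, not the poster's actual path.

```yaml
# Sketch of an input for a custom application log (assumptions noted inline).
filebeat.inputs:
  - type: log                # 7.x style; on 8.x prefer `type: filestream` with a unique `id`
    enabled: true            # the sample filebeat.yml ships this input with `enabled: false`
    paths:
      # Hypothetical absolute path. The glob must match the real file names,
      # e.g. *.php if the application writes files such as log-<date>.php.
      - /path/to/application/logs/log-*.php

# Make Filebeat's own logging verbose so harvesting problems become visible.
logging.level: debug
logging.selectors: ["*"]
```

With this in place, `filebeat test config` checks the file for errors and `filebeat test output` checks connectivity to the configured output. Running `filebeat -e` prints Filebeat's log to stderr, where you can see whether any file is actually being harvested.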
